Migrating Oracle to Amazon Redshift

Case Study

To Increase Scalability and reduce Cost

Business Case

To migrate the existing Oracle data warehouse to a new data-warehouse solution using Amazon Redshift to improve query and data load performance and to increase scalability and reduce cost.

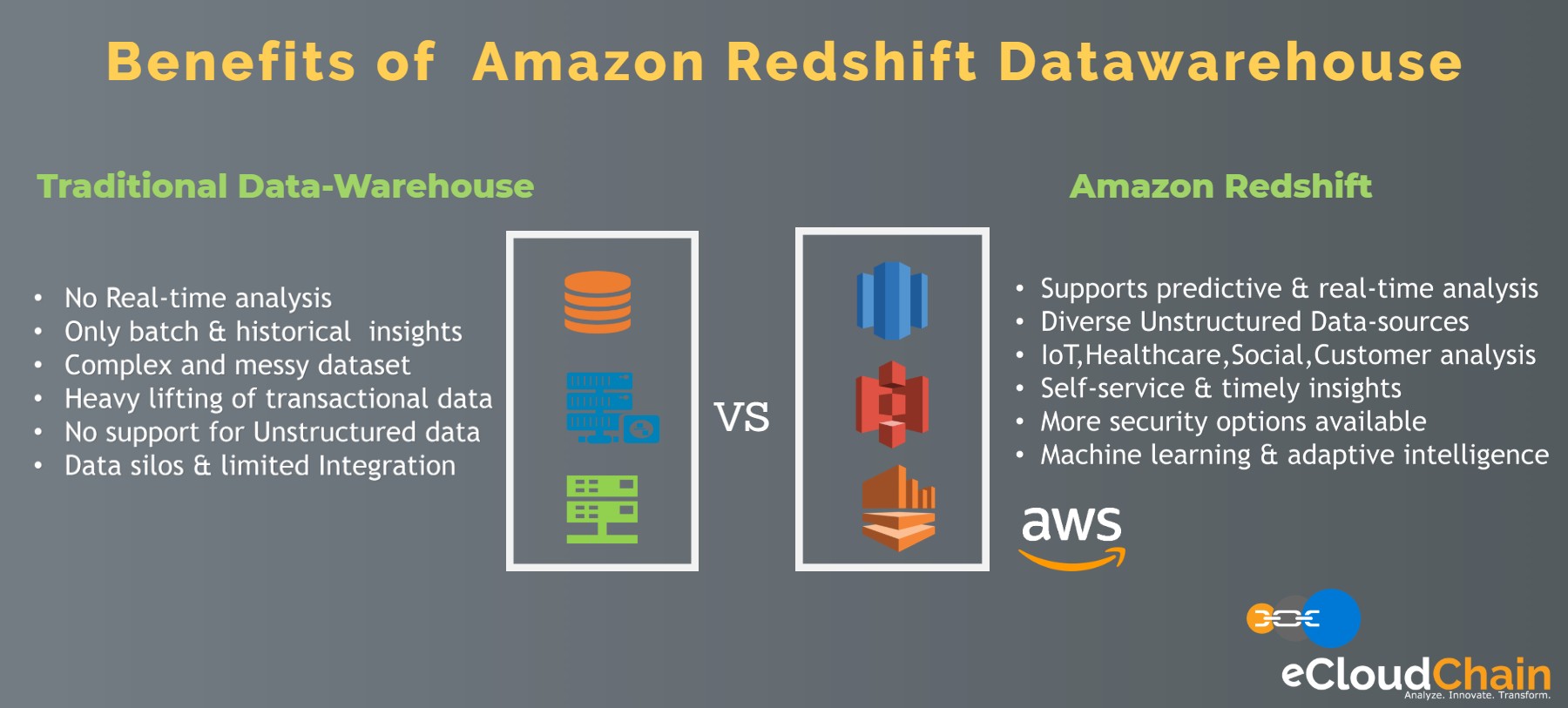

Amazon Redshift uses MPP(Massively Parallel Processing) data warehouse architecture to deliver high throughput between the CPUs and drives by using parallelize and distributed SQL queries loads.

Amazon Redshift is a fully managed petabyte-scale data warehouse solution which supports automatic provisioning of the required infrastructure and manages most of the administrative tasks like backups and replications. It automatically backups up the data to S3 in a different region for disaster recovery. It uses the result caching mechanism for repeat queries to provide significant performance improvements.

Amazon Redshift is a highly secure data warehousing solution for data encryption. It can quickly be configured to use an SSL protocol to encrypt the data in transit and AES-256 encryption protocol for data at rest. With data at rest encryption, all the data stored on to the disk is encrypted including the backups.

The customer has decided to utilize these features of Amazon Redshift for their new data-warehousing solution while continuing to use the existing BI & Data visualization tools.

Challenges

- Time-consuming batch and historical analysis

- No real-time data analysis

- No reliable Disaster Recovery DR solution

- Complex and messy data sets

- The heavy lifting of transitional data

- No support for unstructured data

- Data silos and limited integration

- Poor customer experience

- Poor adapt to change

- High application downtime

Solution Overview

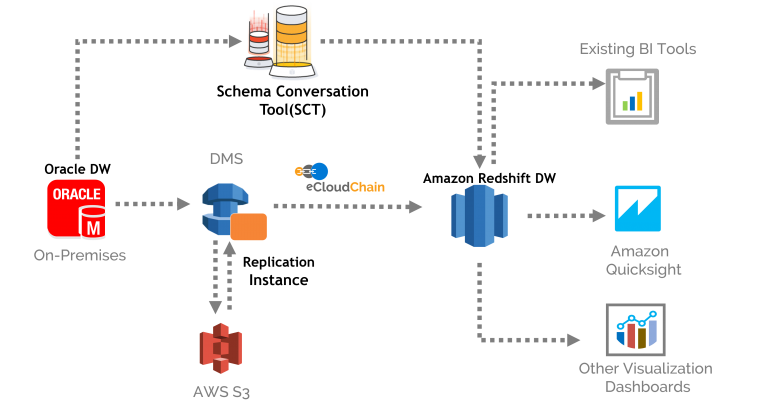

eCloudChain has successfully Migrated the Oracle DB to Amazon Redshift using AWS Data-Migration-Service (DMS) and AWS Schema Conversion Tool (SCT )

Due to heterogeneous migration from Oracle to Amazon Redshift, a schema conversion was required for the compatibility of the database objects and data types, For that SCT was deployed on AWS EC2 as the client application to provide an automated schema conversion of the existing Oracle schema objects into Amazon Redshift compatible schema.

As the outcome of this exercise, a Data migration assessment report is generated by SCT for the schema conversion from Oracle to Amazon Redshift. Besides the detail information on schema conversion objects, This assessment report also provides information on those database objects which are not convertible from Oracle to Amazon Redshift and needs to be handled manually along with other issues or limitations related to object conversion during the migration process.

Manual changes are made on the Oracle objects that could not be converted into the Redshift compatible schema including primary & foreign keys, database triggers, functions, stored procedures, etc.

AWS Data Migration Service(DMS) is installed and configured with the required parameters on the AWS Management Console. DMS provides easy and secure data migration with minimal downtime. A replication instance is created on AWS EC2 that is used by DMS for the migration process. DMS service needs a source endpoint i.e of Oracle, a target endpoint i.e of Redshift, a replication server and a task.

AWS DMS uses one S3 bucket in the same region as the Amazon Redshift as the staging area for the migration. Since DMS keeps the source and the target databases in sync including the ongoing transitional data, It eliminates the need to migrate the delta after the cutover hence after the cutover, all we need to do is to apply the pending transactions before switching over to the Amazon Redshift database. This way the downtime is minimized during the migration.

After the Migration is completed, testing is performed by running a few queries on the Amazon Redshift to ensure that all of the data from Oracle database is migrated to Redshift successfully.Once all the required changes are made, schema conversion is applied to the target database.

Value Proposition

The new data warehouse solution on Amazon Redshift has substantially improved query and data load performance, increase scalability, and reduced the cost